Monitoring Your Applications in AWS

Building out a sufficient monitoring suite is critical to measuring the performance and availability of your services. The best time to start thinking about monitoring your applications is before they go to production. The AWS ecosystem offers a plethora of tools for configuring application monitoring and alerting.

Where to Begin

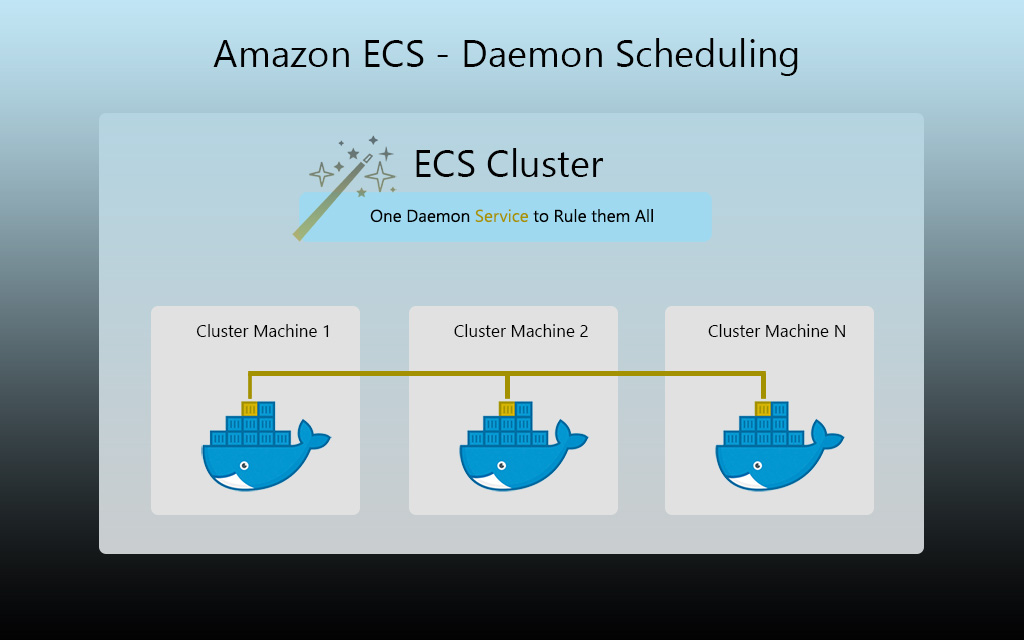

If you’re running your application services in Amazon ECS, AWS provides the foundational memory and CPU utilization metrics you should target for monitoring right out of the gate. CloudWatch is the service that provides these metrics, and it underpins much of the alerting and autoscaling magic that’s available to you in AWS.

If you’re not running in Docker/ECS, gaining access to memory metrics for an application running on EC2 instances is a little bit more work, but with a solid monitoring agent you should be able to get memory utilization published to your monitoring platform in no time. There are also some simple cron-based scripts out there that you can deploy using user data scripts which will post memory metrics to CloudWatch for you.
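As a rough sketch of what such a cron-driven script might look like, the snippet below reads `/proc/meminfo` on a Linux instance and publishes a memory utilization percentage as a custom CloudWatch metric. The namespace `Custom/System`, the metric name `MemoryUtilization`, and the helper names are assumptions made for illustration, and the instance is assumed to have `boto3` installed plus an IAM role that allows `cloudwatch:PutMetricData`.

```python
def parse_meminfo(text):
    """Parse the contents of /proc/meminfo into {field_name: value}."""
    values = {}
    for line in text.splitlines():
        name, _, rest = line.partition(":")
        parts = rest.split()
        if parts:
            values[name.strip()] = int(parts[0])  # most fields are in kB
    return values


def memory_utilization_percent(meminfo):
    """Percent of memory in use, derived from MemTotal and MemAvailable."""
    total = meminfo["MemTotal"]
    available = meminfo["MemAvailable"]
    return 100.0 * (total - available) / total


def build_metric_data(percent, instance_id):
    """Build the MetricData payload for CloudWatch put_metric_data."""
    return [{
        "MetricName": "MemoryUtilization",  # hypothetical metric name
        "Dimensions": [{"Name": "InstanceId", "Value": instance_id}],
        "Unit": "Percent",
        "Value": percent,
    }]


def publish_memory_metric(instance_id, namespace="Custom/System"):
    """Read local memory usage and publish it. Intended to be run from cron."""
    import boto3  # imported lazily so the helpers above work without it

    with open("/proc/meminfo") as handle:
        meminfo = parse_meminfo(handle.read())
    boto3.client("cloudwatch").put_metric_data(
        Namespace=namespace,
        MetricData=build_metric_data(
            memory_utilization_percent(meminfo), instance_id
        ),
    )
```

A cron entry that calls `publish_memory_metric` once a minute would give you a memory series you can graph and alarm on alongside the built-in CPU metric.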

In this article, I’m going to share a practical queue worker monitoring example which should help get you thinking about how to monitor your application. I’ll also go into how an APM and custom application metrics can fit into your monitoring system, and how they help save you time investigating issues, thus improving your incident resolution times.

Statistics to Use for Monitoring Your Applications

The statistics you choose when building monitoring dashboards and alerting are important for getting a comprehensive overview of how an application behaves. For starters, tracking both the average and the maximum of each metric is a good route to go: the average shows normal behavior, while the maximum exposes short spikes that an average smooths away.
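To make the average-versus-maximum idea concrete, here is a minimal sketch that requests both statistics for an ECS service’s CPU utilization from CloudWatch and condenses the result. The cluster and service names are placeholders, and the function names are illustrative rather than part of any AWS SDK.

```python
from datetime import datetime, timedelta, timezone


def build_stats_request(cluster, service, metric_name="CPUUtilization",
                        hours=3, period_seconds=300, now=None):
    """Keyword arguments for CloudWatch get_metric_statistics."""
    end = now or datetime.now(timezone.utc)
    return {
        "Namespace": "AWS/ECS",
        "MetricName": metric_name,
        "Dimensions": [
            {"Name": "ClusterName", "Value": cluster},
            {"Name": "ServiceName", "Value": service},
        ],
        "StartTime": end - timedelta(hours=hours),
        "EndTime": end,
        "Period": period_seconds,
        "Statistics": ["Average", "Maximum"],
    }


def summarize(datapoints):
    """Condense datapoints into a typical value and a peak value.

    The mean of per-period averages is an approximation, since periods
    are not weighted by their sample counts.
    """
    averages = [point["Average"] for point in datapoints]
    return {
        "average": sum(averages) / len(averages),
        "peak": max(point["Maximum"] for point in datapoints),
    }


def fetch_datapoints(request):
    """Run the request against CloudWatch (requires boto3 and credentials)."""
    import boto3

    return boto3.client("cloudwatch").get_metric_statistics(**request)["Datapoints"]
```

A service whose average sits at 25% but whose peak reaches 95% looks healthy on an average-only dashboard, which is exactly the case the maximum statistic is there to catch.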

Why CPU and Memory?

We want to understand how much memory or CPU an application uses normally, but also how much it uses when the application is under heavy load. By monitoring our core metrics, when we become aware of abnormalities in the service’s behavior, we’re given the opportunity to trace through what was happening in our application during that time. In my experience, when digging through application logs while investigating spikes, we often uncover unexpected or untested permutations of payload sizes, request types, or code paths which make our application go on the fritz.

Sometimes, a spike could indicate a local system bottleneck, such as disk I/O, or a downstream dependency which suddenly causes things to back up in memory. All of the potential ways applications can misbehave are interesting, which is why I think I enjoy programming and systems so much!

CloudWatch Metrics

Beyond the basics of systems monitoring, if your application is leveraging AWS services to fulfill its purpose, those resources should be monitored adequately as well. AWS provides a plethora of CloudWatch metrics for each service they offer which can serve as a foundation for monitoring your AWS dependencies.

Our job as cloud engineers is to consider that the metrics of the service we’re leveraging tell a story. If you’re not sure what they mean, read the AWS documentation and try to understand what value of a given metric would indicate that your application isn’t behaving as expected.

SQS Worker Monitoring Example

Here’s an example that illustrates how we can use the SQS CloudWatch metrics namespace to tell a tale about our application performance:

Let’s say you have a microservice that processes messages from an SQS queue. In this scenario, one might want to configure monitoring and alerting on CloudWatch metrics in the SQS namespace such as:

ApproximateNumberOfMessagesVisible

Number of messages available for retrieval, meaning they have not yet been locked by a worker. Messages become visible again after the visibility timeout expires or is changed programmatically.

An excessive queue length might indicate that the worker:

- Isn’t consuming messages fast enough and might need to scale horizontally (autoscaling)

- Is jammed or in a broken state

- Is experiencing a large influx of incoming messages

- Has a downstream dependency which is unavailable causing processing failures

ApproximateAgeOfOldestMessage

Approximate number of seconds the oldest message in the queue has been waiting for processing.

Stale messages in the queue might indicate that the worker:

- Isn’t consuming messages fast enough and might need to scale horizontally (autoscaling)

- Is jammed or in a broken state

- Is experiencing a large influx of incoming messages

- Has a downstream dependency which is unavailable causing processing failures

Note: In an ideal world, messages should be processed in a timely fashion, relative to expectations. If messages are getting stale, this could cause other application issues or degrade the user experience (UX) if the messages relate to a customer-facing application.

NumberOfMessagesReceived

Number of messages returned to workers by receive calls for processing, but not necessarily deleted.

- Can be used to monitor for throughput dropping under the expected threshold.

- If the worker never pulls fewer than X messages per 15 minutes, one might monitor for anything fewer than X.

- If no messages are being received, maybe a recent code change has caused an issue
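The "fewer than X messages per 15 minutes" check above could be expressed as a CloudWatch alarm along these lines. This is a sketch under assumptions: the alarm name, the choice to treat missing data as breaching, and the function names are illustrative, and the right threshold depends entirely on your worker.

```python
def build_low_throughput_alarm(queue_name, min_messages, window_seconds=900):
    """Keyword arguments for CloudWatch put_metric_alarm.

    Fires when fewer than `min_messages` were received from the queue
    during one window (15 minutes by default).
    """
    return {
        "AlarmName": f"{queue_name}-low-throughput",  # hypothetical naming scheme
        "Namespace": "AWS/SQS",
        "MetricName": "NumberOfMessagesReceived",
        "Dimensions": [{"Name": "QueueName", "Value": queue_name}],
        "Statistic": "Sum",
        "Period": window_seconds,
        "EvaluationPeriods": 1,
        "Threshold": min_messages,
        "ComparisonOperator": "LessThanThreshold",
        # Assumption: a window with no data at all is treated as a problem.
        "TreatMissingData": "breaching",
    }


def create_alarm(params):
    """Create or update the alarm (requires boto3 and credentials)."""
    import boto3

    boto3.client("cloudwatch").put_metric_alarm(**params)
```

For a queue that is legitimately idle overnight, `TreatMissingData` set to `notBreaching` may be the better choice, since otherwise the alarm would fire every quiet night.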

NumberOfMessagesSent

Number of messages which were added to the SQS queue.

- Useful to monitor for upstream application issues

- Useful for determining when the queue’s message producer outputs the greatest number of messages

NumberOfMessagesDeleted

The number of SQS messages that were successfully processed and removed from the queue.

- Useful to monitor for ensuring the application is processing messages at the expected rate

- Could indicate a problem if this number holds steady at 0, depending on the app

- Can be cross-referenced with other metrics to determine whether there is an issue, and where it may lie

By implementing comprehensive monitoring of our SQS queues, in most cases, we drastically reduce the amount of time it takes to identify which system may be the culprit for an anomaly. If messages simply aren’t arriving in the queue, it is reasonable to suspect that the producer (the service that creates messages in the inbound queue) has some sort of issue. If messages are sitting in the queue too long, as evidenced by the ApproximateAgeOfOldestMessage metric, we may need to scale out our worker. If no messages are getting deleted, the application may not be able to process the payloads, for various reasons. Monitoring does more than just let us know when there’s an issue. If configured properly, it can give us a clear idea of where to start investigating. As I’m sure you know, when something catches fire in production, we want to get to the bottom of the issue ASAP.
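The reasoning in the paragraph above can be written down as a small triage helper. It is only a sketch: the function name, the input shape, and the 10-minute staleness default are assumptions, and a real runbook would carry more nuance than three rules.

```python
def triage(sent, received, deleted, oldest_age_seconds, max_age_seconds=600):
    """Suggest where to start investigating, given SQS metric values
    summed (or sampled) over the same recent time window."""
    findings = []
    if sent == 0:
        findings.append("producer: no messages are arriving in the queue")
    if oldest_age_seconds > max_age_seconds:
        findings.append("worker capacity: messages are going stale, consider scaling out")
    if received > 0 and deleted == 0:
        findings.append("worker processing: messages are received but never deleted")
    return findings


# Example: messages are flowing in and being picked up, but none complete.
for finding in triage(sent=500, received=480, deleted=0, oldest_age_seconds=120):
    print(finding)
```

An empty list does not prove the system is healthy. It only means none of these three patterns matched in the window you looked at.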

Alerting

When it comes to where to configure alerting, we have numerous options.

For a low-cost but less sophisticated option, we can simply create CloudWatch Alarms based on metrics that exist in CloudWatch. Create an SNS topic and add the relevant team members as email subscribers to the topic. You can then trigger email notifications when the alarm enters the ALARM state. You’ll also want to add another notification to the alarm which sends a follow-up email when the alarm returns to the OK state and all is well again.
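Here is a minimal sketch of that setup using `boto3`-style clients: one SNS topic, email subscribers, and an alarm that notifies the topic both when it fires and when it recovers. The topic and alarm names, the metric chosen, and the 10-minute threshold are assumptions for illustration. The clients are passed in as arguments so the function is easy to exercise with stand-ins.

```python
def create_email_alarm(sns, cloudwatch, queue_name, emails, max_age_seconds=600):
    """Create an SNS topic with email subscribers and an alarm that
    notifies it on both ALARM and OK transitions."""
    topic_arn = sns.create_topic(Name=f"{queue_name}-alerts")["TopicArn"]
    for email in emails:
        # Each recipient must confirm the subscription email from AWS.
        sns.subscribe(TopicArn=topic_arn, Protocol="email", Endpoint=email)

    cloudwatch.put_metric_alarm(
        AlarmName=f"{queue_name}-oldest-message-age",
        Namespace="AWS/SQS",
        MetricName="ApproximateAgeOfOldestMessage",
        Dimensions=[{"Name": "QueueName", "Value": queue_name}],
        Statistic="Maximum",
        Period=300,
        EvaluationPeriods=3,  # three consecutive 5-minute periods
        Threshold=max_age_seconds,
        ComparisonOperator="GreaterThanThreshold",
        AlarmActions=[topic_arn],  # email when the alarm fires
        OKActions=[topic_arn],     # follow-up email on recovery
    )
    return topic_arn
```

With real clients this would be called as `create_email_alarm(boto3.client("sns"), boto3.client("cloudwatch"), "orders", ["oncall@example.com"])`, where the queue name and address are placeholders.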

If you have access to a third-party monitoring tool like Datadog, you can configure it to pull in the CloudWatch metrics of your AWS account(s). From Datadog, you can then configure granular monitors that escalate issues via mediums such as PagerDuty, Slack, VictorOps or other incident response platforms.

Unfortunately, in most cases, setting up monitoring isn’t a one-and-done kind of scenario (we wish!). You’ll likely need to fine-tune your alerting as you figure out what works and what doesn’t. If we set our alerting thresholds too aggressively, we can end up in a story like the Little Cloud that Cried Wolf. In other words, we see false alerts because our alerting is too sensitive.

A great way to test out your alerting is to enable it passively in production to see what normal metric value ranges are. Don’t go calling anyone in the middle of the night right away, but do review what would have happened had it alerted. Fine-tune your thresholds based on what you’re seeing. Not every application is the same either. Some systems may experience very large spikes in inbound queue messages, whereas others may have fewer messages which take much longer to process.
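One way to do that review is to replay recorded metric values against a candidate threshold and count how often it would have paged someone. The sketch below assumes you have exported a series of datapoints (for example queue depth per 5-minute period). The helper names and the sample numbers are made up for illustration.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a list of numbers (pct from 0 to 100)."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def count_alerts(values, threshold, consecutive=3):
    """Count how many times an alert would have fired. A run of at least
    `consecutive` datapoints above the threshold counts as one alert."""
    alerts = 0
    run = 0
    for value in values:
        if value > threshold:
            run += 1
            if run == consecutive:
                alerts += 1
        else:
            run = 0
    return alerts


# Hypothetical queue-depth history, one value per period.
history = [10, 12, 11, 300, 13, 250, 260, 270, 12, 11]
print("median depth:", percentile(history, 50))
print("alerts if 1 breach is enough:", count_alerts(history, 200, consecutive=1))
print("alerts if 3 breaches are needed:", count_alerts(history, 200, consecutive=3))
```

Requiring several consecutive breaching datapoints is a common way to ignore one-off spikes while still catching sustained backlogs.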

Another thing I usually recommend is to run load tests against your applications with production-like traffic to see if the alerts trigger.

Monitoring and alerting are as much about knowing when something is about to break as they are about knowing when something is already broken. The goal is to be proactive rather than reactive. Consider setting up warning thresholds to let you know when things are starting to look abnormal. In the past, I’ve usually used a dedicated Slack room for project-specific alerts. Throughout the day, you can keep an ear to the ground via those rooms.
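A two-tier threshold can be sketched in a few lines: warnings go somewhere low-noise such as a Slack room, while critical breaches page a human. The destination names and the example numbers here are placeholders, not a prescribed setup.

```python
# Hypothetical routing: where each severity level should be delivered.
ROUTES = {"ok": None, "warning": "slack", "critical": "pager"}


def classify(value, warning, critical):
    """Map a metric value to a severity level using two thresholds."""
    if value >= critical:
        return "critical"
    if value >= warning:
        return "warning"
    return "ok"


def route(value, warning, critical):
    """Return the destination for this value, or None if all is well."""
    return ROUTES[classify(value, warning, critical)]


# Example: oldest message age in seconds, warn at 5 minutes, page at 15.
print(route(420, warning=300, critical=900))
```

The gap between the two thresholds is the window in which someone watching the Slack room can act before anyone gets paged.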

Application Performance Management

Having monitoring and alerting configured around the components that surround your application is important, but it can only provide visibility to an extent. We might know that our worker isn’t processing certain messages properly, but we might not know the nature of the message types or why they’re failing.

Let’s say that 50% of the messages going into the queue end up getting deleted, but many of the rest are ending up in your dead letter queue (DLQ). We could sift through hundreds of payloads in the DLQ, or we could integrate deeper metrics into our application to tell us exactly what failed to be processed. This is where APM and custom metrics come into play.

As an example, let’s say that your SQS worker is responsible for processing 3 types of messages: emails, reports, and account updates.

Using an APM solution that’s integrated into our codebase, we could see a detailed breakdown of what sorts of operations are being performed during each request. If the account update messages were failing to be processed, and as part of the processing operation they hit an API, we might see that the request to the API server is timing out.

Custom Application Metrics for Greater Clairvoyance

I’m a big fan of integrating custom metrics in the applications that I manage. If you’re using something like Datadog, you could use DogStatsD to increment a counter when certain conditions occur. In line with the above example, for email sending operations, we might publish a metric for how many confirmation emails were successfully sent and how many failed to send. From the monitoring platform, we can build dashboards to get clear insights into how our application is performing.
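To show what incrementing a counter involves, the sketch below formats DogStatsD counter datagrams by hand and sends them over UDP to a locally running agent on the default port 8125. In practice you would more likely use Datadog’s official client library. The metric names, the tag, and the `send_confirmation_email` wrapper are hypothetical.

```python
import socket


def format_counter(name, value=1, tags=None):
    """Format a DogStatsD counter datagram such as
    'worker.emails.sent:1|c|#type:confirmation'."""
    datagram = f"{name}:{value}|c"
    if tags:
        datagram += "|#" + ",".join(tags)
    return datagram


def increment(name, tags=None, host="127.0.0.1", port=8125):
    """Fire-and-forget UDP send to a DogStatsD agent."""
    payload = format_counter(name, 1, tags).encode("utf-8")
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(payload, (host, port))


def send_confirmation_email(send, recipient, emit=increment):
    """Wrap an email-sending callable and count successes and failures."""
    try:
        send(recipient)
    except Exception:
        emit("worker.emails.failed", tags=["type:confirmation"])
        raise
    emit("worker.emails.sent", tags=["type:confirmation"])
```

Because the counters carry a `type` tag, a single dashboard can break sent and failed emails down by message type without needing a separate metric name for each.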

Publishing custom metrics has many strengths. In the case of a load-balanced application in AWS, you may have noticed that AWS doesn’t distinguish a 401 error from a 403 error in its ELB metrics, which only report counts per status class such as 4XX. Publishing more granular status code results is therefore quite valuable, since in modern APIs, returning a 401 may be exactly the right thing to do. Having these metrics in Datadog or another monitoring platform will enable you to, again, have a great idea right off the bat of what might be going wrong, without needing to dig through ELB access logs.
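The difference between class-level and exact status code counts is easy to see with a handful of sample responses. This is an illustrative sketch only: the sample codes are invented and the helper names are not part of any AWS or Datadog API.

```python
from collections import Counter


def status_class(code):
    """Collapse a status code into its class, e.g. 401 -> '4XX'."""
    return f"{code // 100}XX"


def summarize_statuses(codes):
    """Return (exact counts, per-class counts) for a list of status codes."""
    exact = Counter(codes)
    by_class = Counter(status_class(code) for code in codes)
    return exact, by_class


# Hypothetical responses served during one minute.
exact, by_class = summarize_statuses([200, 401, 401, 403, 500])
print("class view:", dict(by_class))
print("exact view:", dict(exact))
```

The class view reports three 4XX responses, while the exact view shows that two were expected 401s and only one was a 403 worth a closer look.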

I hope you’ve found this article on monitoring your applications informative, and I’d like to invite you to check out our product, Clouductivity Navigator – a Chrome Extension to improve your productivity in AWS. It helps you get to the documentation and AWS service pages you need, without clicking through the console. Download your free trial today!

About the Author

Marcus Bastian is a Senior Site Reliability Engineer who works with AWS and Google Cloud customers to implement, automate, and improve the performance of their applications.
